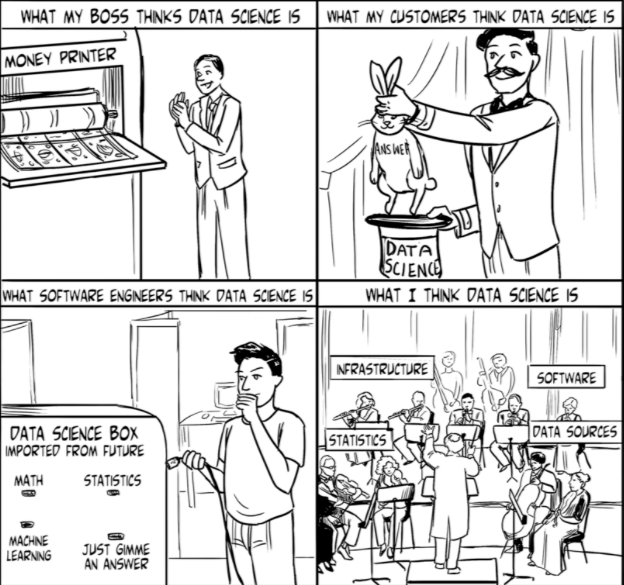

Almost everyone believes they know what data science is until you ask them what it is:


Why Data Science

Until recently, the terms data science and business analysis were sometimes used interchangeably. In fact, in a small organization it can be difficult to distinguish between the roles of a business analyst and a data scientist.

In today’s business world of bigger data and cheaper computing, think of data science as business analysis on steroids, serious steroids.

What is the difference between the two?

Compared to business analysts, data scientists have more advanced technical expertise in areas such as systems engineering, computer programming, and statistics.

Business analysts rely more on human judgment and intuition, which many experts consider inadequate and which can put businesses at a disadvantage compared to the data-driven findings that data scientists generate.

Moreover, business analysts tend to focus on a single perspective, such as what worked in the past. Data scientists, on the other hand, perform a more fluid analysis oriented toward future events.

Data scientists explore data and discover patterns that enable executives in your organization to make big decisions informed by big data. They help businesses use large datasets to execute specific functions and processes while keeping a focus on performance and operations.

Put differently, data science enables the conversion of valuable data into actual business value.

This entails translating patterns and insights contained in the data into language businesses can understand. Some advanced data science teams are looking for ways to use predictive analytics to address the challenges businesses face.

The main advantages of data science to your organization include:

- Assisting marketing and sales executives in identifying the most profitable products based on factors such as season, region, and customers, among others.

- Assisting retailers with staffing plans in seasonal businesses.

- Tracking anomalies or rare spikes in financial transactions that may be a sign of fraudulent activity.

- Refining standard reports and metrics to meet the needs of executives looking for support for their decisions.

- Performing market research and tracking external trends, such as shifts in demographic segments and geography, that affect specific products.
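As a rough illustration of the fraud-monitoring point above, here is a minimal sketch that flags unusually large or small transactions using the modified z-score (based on the median and the median absolute deviation, with the commonly used 3.5 cutoff). The function name `flag_anomalies`, the threshold, and the sample amounts are assumptions for illustration; real fraud detection usually combines many more signals than a single amount column.

```python
import statistics


def flag_anomalies(amounts, threshold=3.5):
    """Return the indices of amounts whose modified z-score exceeds threshold.

    The median and the median absolute deviation (MAD) are used instead of
    the mean and standard deviation because they are far less distorted by
    the very outliers we are trying to find.
    """
    median = statistics.median(amounts)
    mad = statistics.median(abs(x - median) for x in amounts)
    if mad == 0:
        # Most values are identical, so there is no spread to compare against.
        return []
    flagged = []
    for i, x in enumerate(amounts):
        # 0.6745 rescales MAD so the score is comparable to an ordinary
        # z-score when the data are roughly normally distributed.
        score = 0.6745 * (x - median) / mad
        if abs(score) > threshold:
            flagged.append(i)
    return flagged


# Hypothetical card transactions for one customer, in dollars.
transactions = [42.0, 38.5, 51.2, 44.9, 39.0, 47.3, 2500.0, 41.8, 45.6, 40.2]
print(flag_anomalies(transactions))  # → [6]
```

A flagged index is only a prompt for review, not proof of fraud.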

The Complexities of Data Science

The complexity of data science is evident in the three stages of data work: collection, refinement, and delivery.

In the collection stage, data scientists often face challenges posed by the sheer size of the data, which is why most modern databases are designed for the rapid collection, storage, and retrieval of information.

However, when large volumes of data are collected rapidly, the result can be mounds of disorganized data. Data refinement is needed to make that data usable.

In the process of refinement, data is converted into answers that address specific questions. In other words, refinement breaks big data down into pieces that can actually be analyzed.

Data refinement is one of the most crucial steps in data operations because it enables the standardization, categorization, summarization, and tagging of data. It is typically done through statistical modeling that turns piles of disorganized data into usable answers.
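The refinement operations listed above (standardization, categorization, tagging, and aggregating into summaries) can be sketched in pandas. The column names, the amount bands, and the "high value" rule below are hypothetical, chosen only to show each operation on a toy table.

```python
import pandas as pd

# Hypothetical raw sales records with inconsistent formatting.
raw = pd.DataFrame({
    "region": [" north", "North", "SOUTH", "south ", "East"],
    "amount": ["120.50", "80", "300.25", "45.10", "210"],
})

refined = raw.copy()

# Standardization: trim whitespace, normalize case, convert text to numbers.
refined["region"] = refined["region"].str.strip().str.title()
refined["amount"] = pd.to_numeric(refined["amount"])

# Categorization: bin amounts into bands (0, 100], (100, 250], (250, inf).
refined["band"] = pd.cut(
    refined["amount"],
    bins=[0, 100, 250, float("inf")],
    labels=["small", "medium", "large"],
)

# Tagging: mark records that meet a hypothetical business rule.
refined["high_value"] = refined["amount"] > 200

# Summarization: total sales and number of records per region.
summary = refined.groupby("region")["amount"].agg(["sum", "count"])
print(summary)
```

Each step produces a new column or table rather than overwriting the raw data, which keeps the refinement easy to audit.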

Once data are analyzed to answer specific questions, data scientists make the answers available in an appropriate format through the most suitable channel.

Data delivery can be done using different channels depending on the aim of your organization.

It is important to note that the role of data science analysts has changed. In the past, data science was tied to specific technologies and to the complex process of writing SQL queries.

Modern data science analysts convert a request for information into questions and models that self-serve tools can use. Think of how Google shows you search results that are relevant to you.

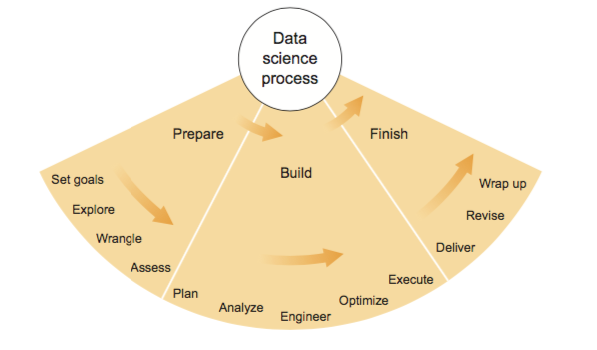

The Data Science Process and Workflow

Image Credit: Think Like a Data Scientist, Brian Godsey

Some of the questions data science analysts should seek to answer include the specific goals of the exercise, the aim of gathering the data, and the factors to be estimated or predicted.

The data science process refers to the framework used to execute data science tasks. The process starts by asking an interesting question.

The second stage in the data science process is data collection. Key questions that data scientists ask at this stage include how the data should be sampled, which data is relevant, and what privacy issues must be considered.

The third stage in the process is data exploration. During this stage, data are plotted, and anomalies and patterns are identified.
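Part of the exploration stage can be sketched with pandas: summary statistics give a first look at the data, and the common rule of flagging points more than 1.5 times the interquartile range (IQR) beyond the quartiles (the same rule box plots use for their whiskers) surfaces candidate anomalies. The weekly order counts are made up for illustration.

```python
import pandas as pd

# Hypothetical weekly order counts.
orders = pd.Series([120, 132, 118, 125, 129, 410, 122, 127, 15, 131], name="orders")

# A first look: count, mean, std, min, quartiles, max.
print(orders.describe())

# Flag points lying more than 1.5 * IQR below Q1 or above Q3.
q1, q3 = orders.quantile(0.25), orders.quantile(0.75)
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr
outliers = orders[(orders < lower) | (orders > upper)]
print(outliers)
```

Plotting the same series, for example with `orders.plot.box()`, shows these two points sitting outside the whiskers.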

The fourth stage is data modeling, in which data scientists build, fit, and validate models.
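The build, fit, and validate activities can be sketched with scikit-learn. The synthetic dataset, the choice of linear regression, and 5-fold cross-validation are assumptions for illustration, not a recommended model for any particular problem.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import cross_val_score, train_test_split

# Synthetic data: y depends linearly on two features, plus noise.
rng = np.random.default_rng(seed=0)
X = rng.uniform(0, 10, size=(200, 2))
y = 3.0 * X[:, 0] - 2.0 * X[:, 1] + rng.normal(0, 1.0, size=200)

# Hold out a test set so validation uses data the model never saw.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0
)

model = LinearRegression()      # build
model.fit(X_train, y_train)     # fit

# Validate: R^2 on the held-out set and 5-fold cross-validation on the training set.
test_r2 = model.score(X_test, y_test)
cv_r2 = cross_val_score(LinearRegression(), X_train, y_train, cv=5)
print(f"held-out R^2: {test_r2:.3f}, CV mean R^2: {cv_r2.mean():.3f}")
print("learned coefficients:", model.coef_)
```

Because the data were generated with known coefficients (3 and -2), you can check that the fitted model recovers them, which is a useful sanity test before trusting a model on real data.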

The last stage in the data science process is communicating and visualizing the results. Questions data scientists answer at this stage include: What lessons were learned? What do the results mean? What patterns can we draw from the data?
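For the communication stage, a clearly labeled chart is often the deliverable. This minimal Matplotlib sketch saves a bar chart to a PNG file; the revenue figures and the filename are hypothetical, and the non-interactive `Agg` backend is selected so the script runs without a display.

```python
from pathlib import Path

import matplotlib
matplotlib.use("Agg")  # render to files only; no display window needed
import matplotlib.pyplot as plt

# Hypothetical results to communicate: quarterly revenue by region.
regions = ["North", "South", "East", "West"]
revenue = [120, 95, 143, 88]

fig, ax = plt.subplots(figsize=(6, 4))
ax.bar(regions, revenue, color="steelblue")
ax.set_title("Quarterly revenue by region")
ax.set_xlabel("Region")
ax.set_ylabel("Revenue (thousands of USD)")
fig.tight_layout()

out_path = Path("revenue_by_region.png")
fig.savefig(out_path, dpi=150)
plt.close(fig)  # free the figure's memory once it is saved
```

A title and labeled axes with units let the chart stand on its own when it is pasted into a report or slide.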

Data science is a complex process that requires extensive skills and tools to execute. One of the most commonly used data science processes is CRISP-DM (Cross-Industry Standard Process for Data Mining).

The key steps in CRISP-DM include business understanding, data understanding, data preparation, modeling, evaluation, and deployment.

From these steps, it is evident that the processes used in data science are broadly similar regardless of the framework used. All approaches to data mining start by asking a question or seeking insight into a specific phenomenon.

The KDD (Knowledge Discovery in Databases) process is another popular approach to data science. This framework uses specific data mining approaches to discover and extract patterns.

The key steps in this framework are selection, pre-processing, transformation, data mining, and interpretation. If the data mining step is equated with the modeling step in CRISP-DM, the steps in the two approaches are similar.
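One way to make the KDD steps concrete is to map them onto a scikit-learn `Pipeline`. The mapping is an illustrative assumption, not part of the KDD definition: choosing columns stands in for selection, median imputation for pre-processing, scaling for transformation, k-means clustering for data mining, and inspecting segment averages for interpretation. The customer table is made up.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical customer table; one spend value is missing.
customers = pd.DataFrame({
    "customer_id": [1, 2, 3, 4, 5, 6, 7, 8],
    "annual_spend": [200.0, 220.0, 210.0, np.nan, 190.0, 5000.0, 5200.0, 5100.0],
    "visits_per_month": [2, 3, 2, 3, 1, 20, 22, 21],
})

# 1. Selection: keep only the columns relevant to the question.
selected = customers[["annual_spend", "visits_per_month"]]

pipeline = Pipeline([
    ("preprocess", SimpleImputer(strategy="median")),  # 2. Pre-processing
    ("transform", StandardScaler()),                   # 3. Transformation
    ("mine", KMeans(n_clusters=2, n_init=10, random_state=0)),  # 4. Data mining
])
labels = pipeline.fit_predict(selected)

# 5. Interpretation: average behavior of each discovered segment.
print(selected.assign(segment=labels).groupby("segment").mean())
```

Keeping the steps in one pipeline means the same pre-processing and transformation are applied consistently whenever the model is refit on new data.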

Tools for Data Science

The most common tools for data science include R, Python, and MATLAB, which are widely used for model development and visualization. R is commonly used for statistical analysis and for displaying results.

R comes with numerous extension packages that enable data analysts to perform specialized operations that include text and speech analysis in addition to genomic analysis.

R is popular among analysts in industries such as health care, finance, pharmaceutical development and business.

However, Python and its tools are the most commonly used, and data scientists who master them have an edge.

PYTHON VS R

Coming from a software engineering background, I prefer Python.

The biggest advantage is:

Python is a general-purpose language with a gentle learning curve, which increases the speed at which you can write a program.

While R is very popular in academia and has many packages that solve common problems, most common data science use cases today are in SaaS (Software as a Service), and there Python beats R hands-down.

TOP PYTHON DATA SCIENCE PACKAGES:

If you’re looking to kickstart data science in Python, here are some key Python packages for solving today’s data science challenges:

- NumPy — powerful for its n-dimensional arrays, linear algebra transformations, Fourier transforms, and advanced random number generation

- statsmodels — for statistical modeling. We can use it to explore data, estimate statistical models, and perform statistical tests. Lots of descriptive statistical analysis

- SciPy — built on NumPy. Useful for high-level science and engineering modules (extends NumPy with Fourier transforms, linear algebra, optimization, and sparse matrices)

- Matplotlib — for plotting all types of graphs, from histograms and line plots to heatmaps

- pandas — for structured data operations and manipulation. It is mostly used for data munging and preparation

- scikit-learn — primarily for machine learning, built on NumPy, SciPy, and Matplotlib

- xlrd — for reading data from Excel files (its companion package xlwt handles writing)

- PyMC — for Bayesian statistics, especially Markov chain Monte Carlo simulations

- rpy2 — R functions made available in Python

- Seaborn — for statistical data visualization. For making informative and attractive graphics, based on Matplotlib

- Bokeh — for interactive plots, dashboards, and data applications on the web. Looks and acts very much like D3.js

- Blaze — extends NumPy and pandas to distributed and streaming datasets. Can be used to access data from multiple different sources

- Scrapy — for web crawling

- SymPy — for symbolic computation, from basic algebra to quantum physics

- Requests — for accessing the web. Works much like urllib2, but is much easier to code with
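To show how a few of these packages fit together, here is a small sketch: NumPy generates and holds the numbers, pandas organizes them into a labeled table, and SciPy's `curve_fit` performs a least-squares fit. The exponential-growth model and the data are made up for illustration.

```python
import numpy as np
import pandas as pd
from scipy.optimize import curve_fit

# NumPy: reproducible synthetic data following y = a * exp(b * x), plus noise.
rng = np.random.default_rng(seed=42)
x = np.linspace(0, 4, 30)
y = 2.0 * np.exp(0.5 * x) + rng.normal(0, 0.1, size=x.size)

# pandas: put the observations in a labeled table for inspection.
df = pd.DataFrame({"x": x, "y": y})
print(df.head())


# SciPy: the model to fit, with parameters a and b.
def exp_model(x, a, b):
    return a * np.exp(b * x)


params, _covariance = curve_fit(
    exp_model, df["x"].to_numpy(), df["y"].to_numpy(), p0=(1.0, 0.1)
)
a_hat, b_hat = params
print(f"fitted a = {a_hat:.2f}, b = {b_hat:.2f}")
```

The fitted values should land close to the a = 2.0 and b = 0.5 used to generate the data.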

Top Resource to Learn Data Science

LEARNING PYTHON LANGUAGE

- Learn Python Codecademy

- Python 3 in one picture

- Dive Into Python: especially good for experienced programmers

- Learn 90% of Python in 90 Minutes

DATA SCIENCE WITH PYTHON

- Statistics and Data Science

- Python for Data Science: Basic Concepts

- Web scraping in Python

- Python For Data Science — A Cheat Sheet For Beginners

LEARNING PANDAS

- Intro to pandas data structures

- 10 minutes to Pandas

- “Large data” work flows using pandas

- Pandas Basics Cheat Sheet

Machine Learning with Python

- Data Normalization in Python

- Machine Learning Algorithms Cheatsheet

- Machine Learning with scikit learn

- Python Machine Learning Book

Learning SciKit

- A Gentle Introduction to Scikit-Learn: A Python Machine Learning Library

- Machine Learning with scikit learn tutorial

- Code example to predict prices of Airbnb vacation rentals, using scikit-learn on Spark

- Introduction to machine learning with scikit-learn, Videos!

I hope this gave you a look into the interesting and exciting world of data science.

Just ask away if any part needs clarification.